The discussion about AI 3D generation has, until recently, focused almost entirely on the user experience: the browser interface, the prompt box, the rotatable preview. That conversation has been useful for understanding the technology, but it has obscured a more consequential question for businesses building commerce, content, or fulfillment products: what happens when 3D generation becomes a service called from code rather than a tool driven by a person? The short answer is that several large categories of business operations look meaningfully different when 3D generation is an API call instead of a manual workflow.

The shape of an API-first 3D pipeline

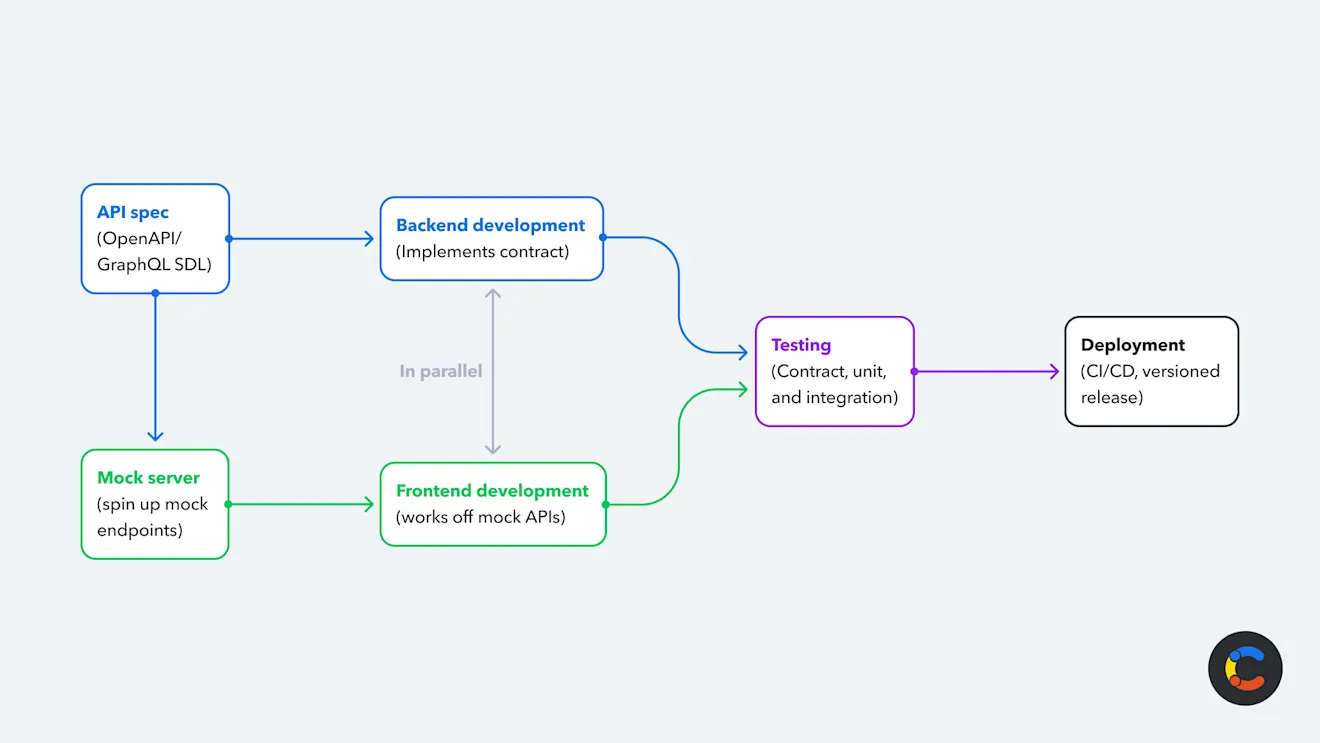

An API-first approach to 3D generation collapses the asset-creation phase into a piece of automated infrastructure. A product manager at an e-commerce company doesn’t open a 3D tool and click through generations. The company’s product data pipeline takes a SKU image, sends it to a generation endpoint, receives a 3D model file, validates the output, and pushes the result to the storefront’s media CDN. The whole process happens on a schedule, against a queue, without a human in the loop.
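The flow in that paragraph can be sketched as a small orchestration function. This is a minimal sketch, not any vendor's SDK: `generate`, `validate`, and `publish` are hypothetical callables standing in for the generation endpoint, a quality check, and the CDN upload, and the scheduler or queue that feeds `run_sku_batch` is left out.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class SkuResult:
    sku: str
    status: str                    # "published" or "rejected"
    cdn_url: Optional[str] = None
    reason: Optional[str] = None


def run_sku_batch(
    batch: list[tuple[str, bytes]],                # (SKU, product image bytes)
    generate: Callable[[bytes], bytes],            # hypothetical: wraps the generation endpoint
    validate: Callable[[bytes], Optional[str]],    # hypothetical: returns a problem, or None if OK
    publish: Callable[[str, bytes], str],          # hypothetical: uploads to the CDN, returns a URL
) -> list[SkuResult]:
    """Image -> 3D model -> validation -> CDN, with no human in the loop."""
    results: list[SkuResult] = []
    for sku, image in batch:
        model = generate(image)
        problem = validate(model)
        if problem is not None:
            # Rejected models never reach the storefront; they are reported instead.
            results.append(SkuResult(sku, "rejected", reason=problem))
            continue
        results.append(SkuResult(sku, "published", cdn_url=publish(sku, model)))
    return results
```

Passing the three steps in as functions keeps the orchestration independent of any one provider and lets it be exercised with stubs before a real API key is involved.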

That shift is why platforms like 3D AI Studio, which expose their generation engines through REST APIs alongside a browser interface, are showing up in commerce engineering conversations more frequently than the consumer-facing surface area would suggest. The browser tool sells the platform. The API integrates it into the systems that actually run businesses.

What changes for product catalogs

The most immediate impact is on commerce companies with large catalogs. A retailer with twenty thousand SKUs has historically faced a binary choice on 3D content: build it for hero products and accept flat imagery for the long tail, or invest tens of millions of dollars in a 3D production effort that takes years. Neither option scales gracefully. An API-first generation pipeline changes the calculation. The cost of generating a single 3D model drops to single-digit cents, and throughput is bounded by API rate limits rather than by human production capacity.
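The claim is easy to check with back-of-the-envelope arithmetic. The sketch below assumes 5 cents per generation call (one point inside the "single-digit cents" range) and a hypothetical rate limit of 60 requests per minute; neither figure is any provider's published price or limit. It also assumes the client keeps enough requests in flight to run at the rate limit, and it accepts an average number of attempts per SKU to account for retries.

```python
import math


def estimate_catalog_run(num_skus: int, cost_per_call_usd: float,
                         requests_per_minute: float,
                         avg_attempts_per_sku: float = 1.0) -> tuple[float, float]:
    """Return (total_cost_usd, wall_clock_hours) for generating a whole catalog.

    Assumes throughput is limited only by the API rate limit, i.e. the client
    keeps enough requests in flight to stay at that limit.
    """
    calls = math.ceil(num_skus * avg_attempts_per_sku)
    total_cost = calls * cost_per_call_usd
    hours = calls / requests_per_minute / 60
    return total_cost, hours


# Illustrative inputs only: 5 cents per call and 60 requests/minute are
# assumptions, not real pricing or limits for any provider.
cost, hours = estimate_catalog_run(20_000, 0.05, 60)
print(f"${cost:,.2f} over {hours:.1f} hours")
```

Under those assumptions a 20,000-SKU catalog comes to about $1,000 and roughly 5.6 hours of wall-clock time; budgeting 1.5 attempts per SKU for retries raises that to about $1,500 and 8.3 hours.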

The result is that the long tail of the catalog, the 19,800 or so non-hero products that have always been imagery-poor, can move into 3D economically for the first time. Conversion lifts that retailers have measured on hero-product 3D experiences can, in principle, extend across the entire catalog. The structural media-quality gap between long-tail products and heroes narrows.

The print-on-demand economics

Print-on-demand operations are the other category where API-first 3D meaningfully changes the business model. A PoD service offering custom figurines, miniatures, or printed objects has historically depended on a library of pre-modeled designs. The marginal cost of adding a design to the catalog has been the cost of modeling it, a meaningful per-design investment. PoD operators have managed this either by limiting catalog size or by building their business model around community-uploaded designs.

API-first 3D generation enables a different model. A customer uploads a photo. A backend pipeline calls a generation API. The resulting 3D model is automatically prepared for printing (scaled, repaired, and oriented for the slicer) and queued for production. The customer experience is “send a photo, receive a printed object.” The operator’s catalog is, in effect, infinite. The economic structure of the business shifts from an inventory of designs to a throughput of generations.
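The print-preparation step is where PoD-specific work sits. A minimal sketch of just the scaling and placement part follows, assuming the generated mesh has already been loaded as a list of (x, y, z) vertices in millimeters with Z pointing up; `scale_and_place` is a hypothetical helper, and mesh repair and slicer orientation are left to dedicated tools.

```python
Vertex = tuple[float, float, float]


def scale_and_place(vertices: list[Vertex], target_height_mm: float) -> list[Vertex]:
    """Uniformly scale a mesh to a target Z height and set it on the build plate.

    Assumes Z is "up". Centers the bounding box on the XY origin and moves the
    lowest point to z = 0. Mesh repair and orientation planning are not handled here.
    """
    if not vertices:
        raise ValueError("mesh has no vertices")
    xs, ys, zs = zip(*vertices)
    height = max(zs) - min(zs)
    if height <= 0:
        raise ValueError("mesh has zero height; cannot scale to a target height")
    s = target_height_mm / height  # one factor for all axes keeps proportions intact
    cx = (min(xs) + max(xs)) / 2
    cy = (min(ys) + max(ys)) / 2
    z0 = min(zs)
    return [((x - cx) * s, (y - cy) * s, (z - z0) * s) for x, y, z in vertices]
```

Using a single scale factor matters for figurines: scaling each axis independently to fit a box would distort the customer's subject.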

Several PoD operators have launched offerings built on this model in 2026: custom pet figurines from photos, personalized memorial objects, and one-off architectural models from photographs of buildings. The margins are reportedly excellent, partly because the marginal cost of generating each unique design is closer to a vending-machine transaction than to a creative-services engagement.

What businesses are integrating, and how

The integration patterns themselves are not exotic. The 3D generation API call sits in the same layer of the stack as image processing APIs, transcription services, and other common third-party integrations. The endpoints accept either an image upload or a text prompt and return a job ID. Fast-tier generations return the model file synchronously; higher-quality generations, which take a few minutes, deliver it via webhook.
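Handling both delivery paths mostly comes down to bookkeeping by job ID. A minimal sketch, assuming hypothetical payload fields (`job_id`, `status`, `model_url`, `error`) rather than any specific provider's schema:

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GenerationJobs:
    """Tracks generation jobs across synchronous and webhook-delivered results.

    Payload field names are hypothetical; map them to your provider's schema.
    """
    pending: set[str] = field(default_factory=set)
    completed: dict[str, str] = field(default_factory=dict)
    failed: dict[str, str] = field(default_factory=dict)

    def on_submit(self, resp: dict) -> Optional[str]:
        """Handle the submit response; return the model URL if it came back inline."""
        job_id = resp["job_id"]
        if resp.get("status") == "completed":  # fast tier: result is synchronous
            self.completed[job_id] = resp["model_url"]
            return resp["model_url"]
        self.pending.add(job_id)  # slower tier: wait for the webhook
        return None

    def on_webhook(self, payload: dict) -> bool:
        """Apply a webhook delivery; return True if it resolved a pending job."""
        job_id = payload["job_id"]
        if job_id not in self.pending:
            return False  # unknown job or duplicate delivery: ignore it
        status = payload.get("status")
        if status == "completed":
            self.pending.discard(job_id)
            self.completed[job_id] = payload["model_url"]
            return True
        if status == "failed":
            self.pending.discard(job_id)
            self.failed[job_id] = payload.get("error", "unknown error")
            return True
        return False  # progress update; job stays pending
```

Ignoring deliveries for jobs that are not pending makes the handler tolerant of webhook retries. A production version would also verify the provider's webhook signature and keep this state in a database rather than in memory.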

The validation layer is where engineering effort tends to concentrate. A business calling the API at scale needs to verify that returned models meet the requirements of downstream systems — polygon count within bounds, watertight geometry for printing applications, valid PBR material structure for rendering applications. The better generation platforms now expose this validation as part of the API response, but most businesses still build their own quality-check layer to handle edge cases.
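A quality-check layer of this kind can start small. The sketch below assumes the model has already been parsed into triangle index triples plus a material dictionary; the face-count bounds are illustrative defaults, the closed-surface test checks that every edge is shared by exactly two faces (a common watertightness heuristic for manifold meshes), and the material checks borrow field names and [0, 1] ranges from glTF 2.0's `pbrMetallicRoughness`. `validate_model` itself is hypothetical.

```python
from collections import Counter

Triangle = tuple[int, int, int]


def validate_model(triangles: list[Triangle], pbr: dict,
                   min_faces: int = 100, max_faces: int = 100_000,
                   require_watertight: bool = False) -> list[str]:
    """Return a list of human-readable problems; an empty list means the model passed."""
    problems: list[str] = []

    # 1. Polygon budget for downstream renderers or slicers (bounds are illustrative).
    n = len(triangles)
    if not min_faces <= n <= max_faces:
        problems.append(f"face count {n} outside [{min_faces}, {max_faces}]")

    # 2. Closed-surface check for printing: in a closed, manifold triangle mesh
    #    every undirected edge is shared by exactly two faces.
    if require_watertight:
        edge_uses = Counter()
        for a, b, c in triangles:
            for u, v in ((a, b), (b, c), (c, a)):
                edge_uses[(min(u, v), max(u, v))] += 1
        bad = sum(1 for uses in edge_uses.values() if uses != 2)
        if bad:
            problems.append(f"{bad} edges not shared by exactly two faces")

    # 3. PBR material sanity, using glTF 2.0 pbrMetallicRoughness field names.
    for key in ("metallicFactor", "roughnessFactor"):
        value = pbr.get(key)
        if value is not None and not 0.0 <= value <= 1.0:
            problems.append(f"{key}={value} outside [0, 1]")
    base = pbr.get("baseColorFactor")
    if base is not None and (len(base) != 4 or not all(0.0 <= c <= 1.0 for c in base)):
        problems.append("baseColorFactor must be four values in [0, 1]")

    return problems
```

Returning a list of problems instead of raising on the first one gives operators a full picture when a model is rejected, which helps when tuning preprocessing or retry rules.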

The other significant engineering investment is around prompt and image preprocessing. The quality of generated output depends materially on the quality of the input. Businesses calling the API at scale typically build a preprocessing layer that normalizes images, generates consistent prompts from product metadata, and handles retry logic for cases where the first generation isn’t acceptable.
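Two pieces of that preprocessing layer are straightforward to sketch: a template that turns product metadata into a consistent prompt, and a retry loop with exponential backoff. Both are hypothetical helpers; the prompt wording and metadata keys (`category`, `color`, `material`) are assumptions, and image normalization is omitted.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def build_prompt(meta: dict) -> str:
    """Turn product metadata into a consistent text prompt (template is hypothetical)."""
    words = [meta.get("color"), meta.get("material"), meta["category"]]
    subject = " ".join(w.strip().lower() for w in words if w and w.strip())
    return f"3D model of a single {subject}, centered, neutral lighting"


def generate_with_retry(attempt: Callable[[], T],
                        is_acceptable: Callable[[T], bool],
                        max_attempts: int = 3,
                        base_delay_s: float = 1.0,
                        sleep: Callable[[float], None] = time.sleep) -> Optional[T]:
    """Call `attempt` until `is_acceptable` passes, backing off exponentially.

    Returns None if every attempt is rejected, so the caller can route the
    item to manual review instead of publishing a bad model.
    """
    for i in range(max_attempts):
        result = attempt()
        if is_acceptable(result):
            return result
        if i < max_attempts - 1:
            sleep(base_delay_s * 2 ** i)  # 1s, 2s, 4s, ... with the defaults
    return None
```

Injecting `sleep` keeps the backoff testable without real delays, and generating prompts from a fixed template means two SKUs with the same metadata get the same request, which makes output quality easier to compare across a catalog.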

Where this goes in 2027

The likely trajectory for API-first 3D is toward becoming a normal layer of the commerce stack, one that sits alongside payments, fulfillment, search, and recommendation as a service platforms call without thinking much about it. The current state, where companies still treat 3D generation as a special integration project, is transitional. The companies building this capability into their products now are doing so ahead of competitors who are likely to do the same within twelve to eighteen months.

For founders, ops leads, and engineers thinking about where 3D generation fits in their product roadmap, the practical recommendation is to prototype the integration now even if the production rollout is a quarter or two away. The capability is mature enough to build against. The cost structures are predictable enough to model. The engineering work to integrate it is real but bounded. And the businesses that have done this work first are showing, in the metrics they share, that the integration was a competitive lever rather than a research project.