Modern software is increasingly defined by how effectively it integrates intelligence rather than how much code is written by hand. Over the past few years, AI model APIs have moved from experimental tools to foundational components that shape how products are designed, deployed, and scaled. Among these, Claude Sonnet 5 has become a commonly referenced baseline for teams evaluating general-purpose model capabilities in real production environments. This shift signals a deeper change in how developers, startups, and enterprises think about long-term system architecture. Understanding where AI model APIs are headed is now a strategic requirement rather than a technical curiosity.

AI APIs as the New Software Infrastructure

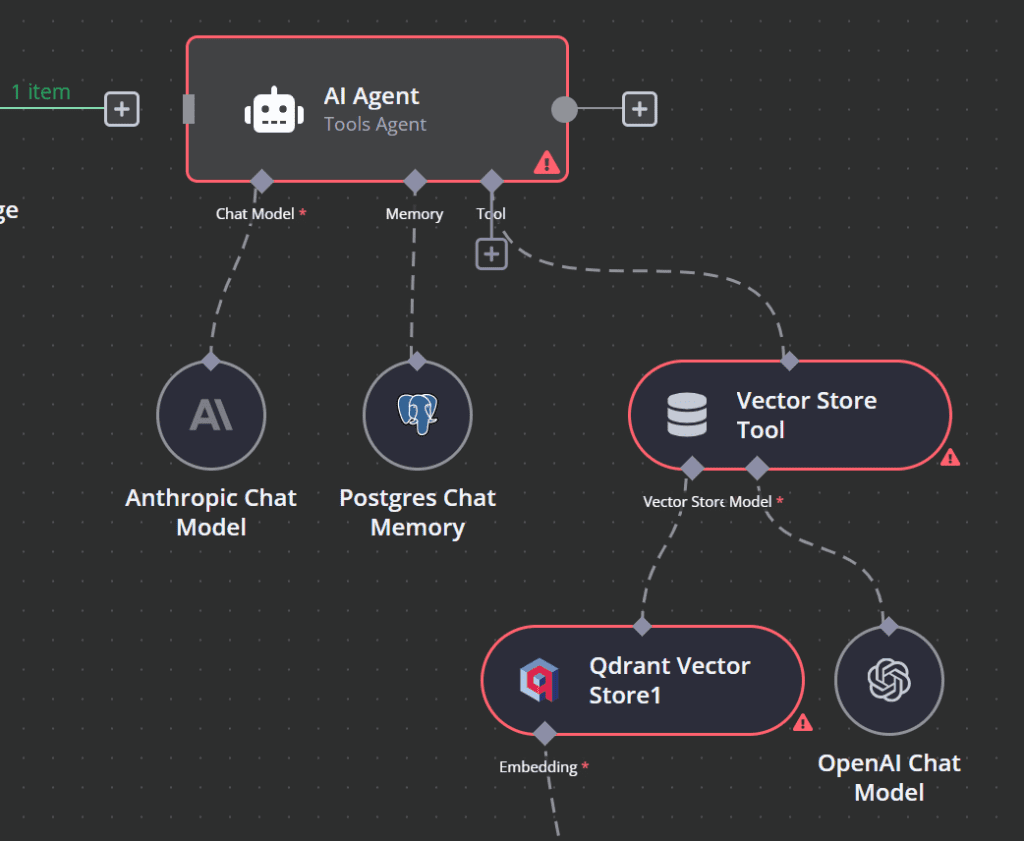

Software infrastructure has historically evolved in layers, from hardware abstraction to operating systems and from databases to cloud platforms. AI model APIs now sit alongside these layers as infrastructure that provides cognitive capability on demand. Instead of building logic entirely through deterministic rules, teams increasingly rely on models to interpret language, reason over context, and generate structured outputs dynamically.

What makes AI APIs infrastructural is their repeatability and reliability at scale. A single integration can power customer support systems, internal analytics tools, developer assistants, and decision support workflows. This mirrors how databases or cloud computing were once adopted, initially as optional enhancements and later as nonnegotiable components of modern stacks.

As this layer matures, architectural decisions around AI APIs start to resemble earlier infrastructure choices. Teams consider latency, reliability, observability, and long-term vendor stability rather than surface-level features. The model itself becomes part of the system contract, influencing how software behaves under real conditions.

How Developers Evaluate Model Capabilities

Developers approach AI model APIs with a practical mindset shaped by production constraints. Early experimentation often focuses on output quality, but sustained usage quickly shifts attention toward consistency, predictability, and integration complexity. A model that performs well in isolated prompts but behaves unpredictably under load introduces risk into the system.

Evaluation typically begins with hands-on testing in realistic workflows. Developers examine how models respond to messy input, partial context, or ambiguous instructions. They measure latency across different usage patterns and assess how well responses align with expected formats without constant prompt tuning.
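As a rough illustration of this kind of testing, the sketch below times a model call over a handful of messy prompts and checks whether each response parses as a JSON object. The `fake_model` function is a hypothetical stand-in for a real API call, and the prompts and the JSON-object format rule are assumptions chosen for the example, not a recommended benchmark.

```python
import json
import statistics
import time
from typing import Callable


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call (assumption for this sketch)."""
    if not prompt.strip():
        # Simulates a model that breaks the expected format on empty input.
        return "Sorry, I did not receive any input."
    return json.dumps({"summary": prompt.strip()[:40]})


def is_valid_json_object(text: str) -> bool:
    """Format check: the response must parse as a JSON object."""
    try:
        return isinstance(json.loads(text), dict)
    except json.JSONDecodeError:
        return False


def evaluate(model: Callable[[str], str], prompts: list[str]) -> dict:
    """Run each prompt once, recording latency and format conformance."""
    latencies = []
    valid = 0
    for prompt in prompts:
        start = time.perf_counter()
        output = model(prompt)
        latencies.append(time.perf_counter() - start)
        if is_valid_json_object(output):
            valid += 1
    return {
        "n": len(prompts),
        "format_pass_rate": valid / len(prompts),
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
    }


# Messy, partial, and ambiguous inputs, as described above.
report = evaluate(fake_model, ["Refund request, order #1182", "   ", "pls fix login??"])
print(report)
```

Swapping `fake_model` for a function that calls a real endpoint would let the same loop compare candidates on identical inputs, although a realistic evaluation would need far more prompts and repeated runs.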

Another key factor is how the model fits into existing tooling. Logging, error handling, and versioning matter because AI outputs are no longer peripheral features but core application logic. As a result, developers treat model APIs less like experimental libraries and more like infrastructure services that must be monitored and governed over time.
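One way to picture this is a thin wrapper that logs every call with a model version tag and retries on failure. The sketch below is a minimal version under stated assumptions: `call_with_logging`, `ModelCallError`, the version string, and the `flaky_model` stub are all hypothetical names invented for illustration, and a real integration would catch the specific error types its client library raises rather than every exception.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("model_calls")


class ModelCallError(RuntimeError):
    """Raised when every attempt to call the model has failed."""


def call_with_logging(
    model: Callable[[str], str],
    prompt: str,
    *,
    model_version: str,
    max_attempts: int = 3,
    backoff_s: float = 0.0,
) -> str:
    """Call the model, logging version, attempt number, and latency."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        start = time.perf_counter()
        try:
            output = model(prompt)
        except Exception as exc:  # broad on purpose: this is only a sketch
            logger.warning(
                "model call failed version=%s attempt=%d error=%r",
                model_version, attempt, exc,
            )
            if attempt == max_attempts:
                raise ModelCallError(f"gave up after {attempt} attempts") from exc
            time.sleep(backoff_s * attempt)  # linear backoff between retries
            continue
        elapsed_ms = (time.perf_counter() - start) * 1000
        logger.info(
            "model call ok version=%s attempt=%d latency_ms=%.1f",
            model_version, attempt, elapsed_ms,
        )
        return output
    raise AssertionError("unreachable")


# Hypothetical stub that fails twice, then succeeds.
calls = {"n": 0}


def flaky_model(prompt: str) -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("simulated timeout")
    return f"ok: {prompt}"


result = call_with_logging(flaky_model, "ping", model_version="example-model-2024-01")
print(result)
```

Recording the version on every log line is what makes later behavior changes traceable to a specific model release rather than to application code.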

Startup Adoption Patterns for AI APIs

Startups often adopt AI model APIs earlier and more aggressively than larger organizations. Speed to market and differentiation are critical, and AI capabilities can compress development timelines dramatically. Instead of building complex systems from scratch, startups use model APIs to prototype features, validate demand, and iterate quickly based on user feedback.

This agility, however, comes with tradeoffs. Startups must balance rapid experimentation with future scalability. An early model choice can shape product behavior and cost structures long after initial traction is achieved. Founders therefore look beyond immediate performance and consider how a model might support evolving use cases as the product matures.

AI APIs also influence startup team composition. Smaller engineering teams can achieve outsized impact when model capabilities replace entire categories of manual logic. This shifts focus toward system design, data flow, and user experience rather than low-level algorithmic implementation.

Enterprise Requirements for Long-Term AI Systems

Enterprises approach AI model APIs with a different set of priorities driven by scale, governance, and risk management. While innovation is important, stability and compliance often dominate decision-making. AI systems in enterprise contexts may influence financial decisions, operational workflows, or customer-facing communications, amplifying the consequences of failure.

Long-term support and transparency matter deeply. Enterprises need confidence that models will not change behavior unpredictably or be discontinued without notice. They also require clear documentation, auditability, and mechanisms for validating outputs against internal standards.

Integration complexity is another critical concern. AI APIs must align with existing identity systems, data pipelines, and security frameworks. As AI becomes embedded across departments, enterprises increasingly treat model selection as an architectural decision comparable to choosing a core database or cloud provider.

How Claude Sonnet 5 Fits General-Purpose Needs

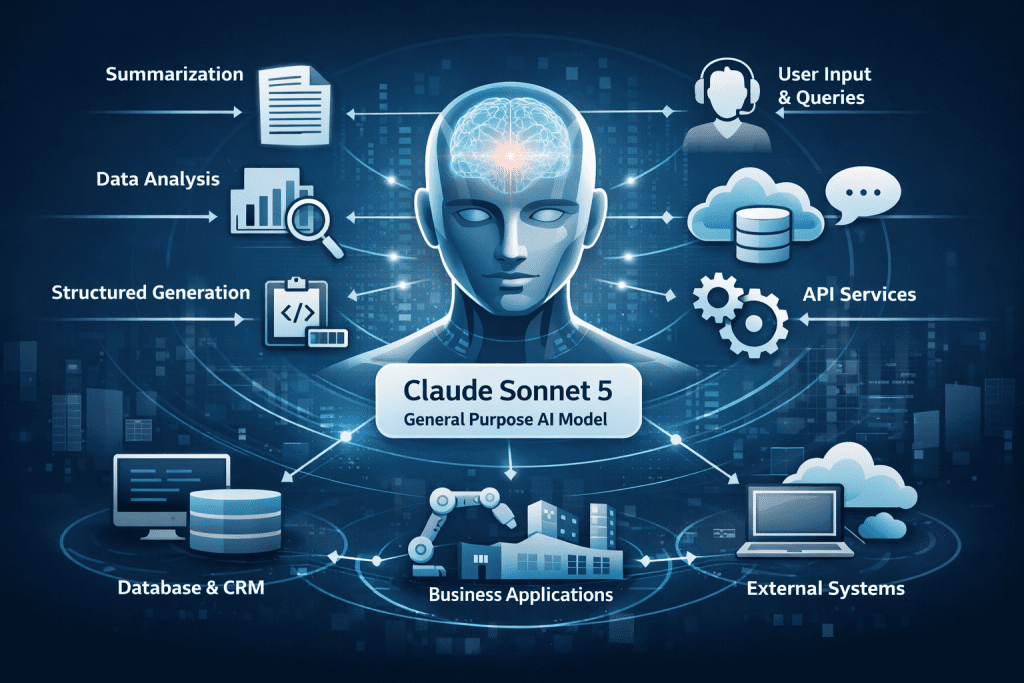

General-purpose models play a central role in AI-driven systems because they handle a wide range of tasks without heavy customization. Claude Sonnet 5 is often positioned in this category due to its balance between reasoning ability, responsiveness, and adaptability across domains.

Teams working with diverse workflows value models that can shift context smoothly, moving from summarization to analysis to structured generation within a single session. This flexibility reduces the need for multiple specialized integrations and simplifies system design.

In practice, general-purpose models are frequently used as orchestration layers. They connect user input to downstream services, interpret intent, and coordinate responses across multiple components. Their role is less about solving narrow problems and more about enabling fluid interaction between humans and software systems.

Deep Reasoning and Claude Opus 4.6

As systems grow more complex, the limits of general-purpose models become apparent in scenarios that demand sustained reasoning across large contexts. This is where Claude Opus 4.6 is commonly referenced as a benchmark for deep reasoning workloads.

High-context reasoning is particularly valuable in enterprise analysis, legal review, technical documentation, and multi-step planning tasks. These use cases require the model to maintain coherence across extended inputs while drawing accurate conclusions that align with domain constraints.

Rather than replacing general models, reasoning-focused models complement them. Architects often design systems where simpler tasks are handled by lighter models while complex decision paths are routed to deeper reasoning engines. This layered approach reflects how human teams delegate work based on complexity and expertise.
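The layered approach can be sketched as a small router that decides which tier should handle a request. Everything in this example is an assumption made for illustration: the `light` and `deep` tier names, the context-length threshold, and the keyword list are hypothetical, and production systems often use a classifier or a cheap model call to make this decision instead of keywords.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    tier: str    # "light" or "deep" (hypothetical tier names)
    reason: str  # why this tier was chosen, useful for logging


# Assumed values for the sketch; tune against real traffic.
LONG_CONTEXT_CHARS = 20_000
MULTI_STEP_KEYWORDS = {"plan", "compare", "audit", "reconcile"}


def choose_route(task: str, context: str = "") -> Route:
    """Send long-context or multi-step work to the deeper tier."""
    if len(context) > LONG_CONTEXT_CHARS:
        return Route("deep", "long context")
    # Match whole words so "explanation" does not trigger "plan".
    words = set(re.findall(r"[a-z]+", task.lower()))
    if words & MULTI_STEP_KEYWORDS:
        return Route("deep", "multi-step task")
    return Route("light", "default")


print(choose_route("Summarize this ticket"))
print(choose_route("Audit this contract for conflicting clauses"))
print(choose_route("Summarize", context="x" * 25_000))
```

Returning a reason alongside the tier keeps routing decisions auditable, which matters once cost and latency differ noticeably between tiers.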

Engineering Accuracy with GPT 5.3 Codex

Software engineering workflows present a distinct set of requirements centered on precision and correctness. In these contexts, GPT 5.3 Codex is frequently used as a reference point for code-centric accuracy and structured reasoning.

Engineering tasks demand outputs that adhere strictly to syntax, logic, and system constraints. A small error can break a build or introduce subtle bugs that are difficult to trace. Models optimized for code understanding and generation therefore prioritize determinism and clarity over conversational flexibility.

Within modern development pipelines, such models support tasks like code review, refactoring, test generation, and documentation alignment. Their value lies not in replacing developers but in reducing cognitive load and accelerating routine tasks while preserving engineering standards.

Designing Future-Proof AI Architectures

Looking ahead, the future of AI model APIs is defined less by individual model releases and more by architectural patterns that accommodate change. Models will continue to evolve, but systems designed with modularity and abstraction can adapt without major rewrites.

Future-proof architectures treat AI models as interchangeable components behind stable interfaces. This allows teams to experiment with new capabilities while maintaining consistent application behavior. Observability and evaluation pipelines become essential, enabling continuous assessment of model performance against real metrics.
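A minimal sketch of this idea, assuming a single text-in, text-out operation, is shown below. The `TextModel` interface, the two toy backends, and the `Summarizer` class are hypothetical names invented for the example; real backends would wrap whichever vendor client a team actually uses.

```python
from typing import Protocol


class TextModel(Protocol):
    """Stable interface the application depends on (hypothetical)."""

    name: str

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Toy backend standing in for one vendor's model."""

    name = "echo-v1"

    def complete(self, prompt: str) -> str:
        return prompt


class UpperModel:
    """Toy backend standing in for a newer or different model."""

    name = "upper-v2"

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class Summarizer:
    """Application code that only knows about the TextModel interface."""

    def __init__(self, model: TextModel) -> None:
        self._model = model

    def summarize(self, text: str) -> dict:
        # Recording the model name keeps outputs attributable during evaluation.
        return {"model": self._model.name, "summary": self._model.complete(text)[:60]}


# Swapping backends requires no change to Summarizer.
for backend in (EchoModel(), UpperModel()):
    print(Summarizer(backend).summarize("quarterly churn rose in the EU region"))
```

Because the application depends only on `TextModel`, a candidate model can be run side by side with the current one on the same inputs before any switch is made.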

Ultimately, AI model APIs are shaping a new paradigm of software development where intelligence is a shared service rather than a bespoke feature. Developers, startups, and enterprises that understand this shift can design systems that remain resilient as models improve and use cases expand. The organizations that succeed will be those that view AI not as a shortcut but as infrastructure that deserves the same rigor and foresight as any other foundational technology.