Introduction

The question of who invented the first digital computer leads onto a shadowy battlefield of genius, war, and litigation. It is not a simple story with one clear hero. For decades, the title was confidently awarded to the ENIAC, a massive machine unveiled to the public in 1946. However, a stunning court ruling in 1973 shattered this narrative, revealing a hidden pioneer whose ideas had been used without credit. To understand the origin of the machine that now shapes our lives, we must look past the popular myths. This article traces the brilliant, and often controversial, history of the first digital computer: a tale of disputed intellectual property, the urgent demands of World War II, and foundational concepts that still power your smartphone today.

The story begins not with a single flash of inspiration, but with a pressing need to solve complex mathematical problems. For centuries, humanity relied on mechanical calculators built from gears and levers, but the dawn of the electronic age promised a revolution. The first digital computer embodied that promise: a leap from the mechanical to the electronic, from the slow and error-prone to the fast and precise. Its invention was not merely a technical achievement; it was a philosophical shift. It pointed toward machines that could manipulate symbols, not just numbers, and follow a set of instructions to carry out any logical task. This concept, born in the minds of a few extraordinary individuals, would go on to reshape the entire world.

Who invented it

For years, the inventors credited with the first digital computer were John Mauchly and J. Presper Eckert. Their creation, the Electronic Numerical Integrator and Computer (ENIAC), was unveiled to the public in 1946 to thunderous applause. A behemoth of vacuum tubes, it became a tangible symbol of American technological prowess, and the names Mauchly and Eckert became synonymous with the dawn of the computing age. They were the celebrated inventors, and their machine was hailed as the first electronic, programmable computer.

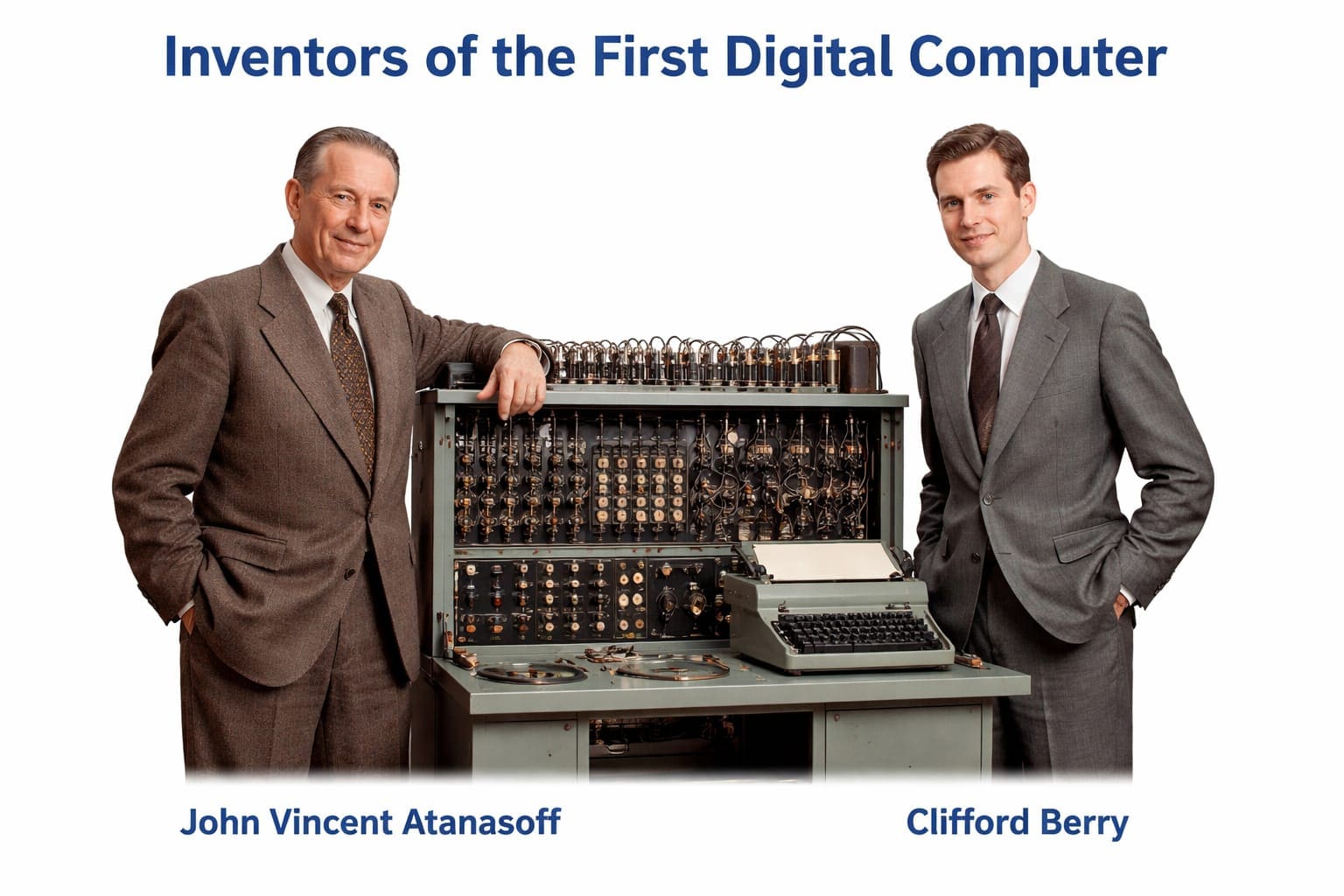

But a deeper, more startling truth lay buried in the cornfields of Iowa. The real visionary behind the first digital computer was John Vincent Atanasoff, a professor of physics and mathematics at Iowa State College who had grown frustrated with how slowly his graduate students could work through their calculations. In the winter of 1937, after a long late-night drive into Illinois, he had a revelation in a roadside bar. He envisioned a machine that would use binary digits (bits) instead of decimal numbers, a radical departure from the decimal calculating machines of the day. It would use electronic vacuum tubes for speed and a new kind of memory whose stored charges would be periodically regenerated. This vision led him to create the Atanasoff-Berry Computer (ABC).

Together with his brilliant graduate student Clifford Berry, Atanasoff built the ABC between 1939 and 1942. It was a purpose-built machine, a prototype designed to solve systems of linear equations. While it was not a general-purpose computer in the modern sense, it was the first digital computer to incorporate three fundamental principles: electronic switching using vacuum tubes, binary arithmetic, and a separation of computing and memory functions. The ABC was a proof of concept, a silent revolution that predated the famous ENIAC. In a landmark 1973 legal decision, the ENIAC patent originally filed by Eckert and Mauchly was invalidated, and the court found that they had derived the idea of the electronic digital computer from Atanasoff, effectively recognizing him as its inventor. The history of computer hardware begins in earnest with his groundbreaking, and for too long overlooked, invention.

When it was invented

The timeline of the first digital computer is a subject of intense debate, precisely because it hinges on the definition of “computer.” If we define it as the first fully electronic, general-purpose, programmable machine, the ENIAC takes the crown, with its public reveal in 1946. The project began in 1943 at the University of Pennsylvania’s Moore School of Electrical Engineering, funded by the U.S. Army, and was a massive undertaking aimed at calculating artillery firing tables. The ENIAC became operational late in 1945, just after World War II had ended.

However, this date is contested by a machine built in the shadows of wartime Britain. Colossus was a series of secret electronic computers built for the British codebreakers at Bletchley Park, and World War II codebreaking was the driving force behind it. The first Colossus became operational in December 1943, a full two years before the ENIAC. It was used to help decipher the Lorenz cipher, a high-level German code. Colossus was electronic, programmable via switches and plugs, and a stunning achievement. But it was a secret, its very existence classified for decades, so its role in the invention of the first digital computer was erased from history until the 1970s.

If we look for the first functional, program-controlled computing machine, regardless of whether it was electronic, we must travel to Germany. Konrad Zuse’s Z3 was completed in 1941. It was an electromechanical machine, using telephone relays instead of vacuum tubes. While not fully electronic, the Z3 was groundbreaking and is considered the world’s first working program-controlled computer. It was destroyed in an Allied bombing raid on Berlin in 1943.

Going back even further, the foundational concept came from a mathematician. In 1936, Alan Turing published the paper describing what became known as the Universal Turing Machine. This was not a physical machine but a mathematical model, a theoretical blueprint that defined what a computer could be, and it laid the groundwork for the entire field of computer science. Before that, the 19th-century visionaries Ada Lovelace and Charles Babbage worked on the Analytical Engine, a mechanical, general-purpose computer that was never completed. So the answer to “When was the first digital computer invented?” is not a single year. It is a constellation of years: 1936 for its theoretical birth, 1941 for the Z3, 1942 for the ABC, 1943 for Colossus, and 1946 for the ENIAC. But for the first electronic digital computer, the historical and legal honor goes to 1942, the year the ABC was completed and tested.
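A tiny simulator can make Turing’s abstract model more concrete. The sketch below is our own illustration, not code or notation from Turing’s paper: a tape, a read/write head, and a hypothetical rule table (`INCREMENT_RULES`) that adds 1 to a binary number. A fixed machine like this one does only one job; Turing’s universal machine went further by reading another machine’s rule table from its own tape.

```python
# Toy Turing machine simulator: an illustrative sketch, not Turing's notation.
# Rules map (state, symbol) -> (symbol_to_write, head_move, next_state).

BLANK = "_"

# Hypothetical rule table that adds 1 to a binary number.
# The head starts on the leftmost digit in state "right".
INCREMENT_RULES = {
    # Move right until we step past the end of the number.
    ("right", "0"): ("0", +1, "right"),
    ("right", "1"): ("1", +1, "right"),
    ("right", BLANK): (BLANK, -1, "carry"),
    # Walk back left, turning trailing 1s into 0s until the carry is absorbed.
    ("carry", "1"): ("0", -1, "carry"),
    ("carry", "0"): ("1", 0, "halt"),
    ("carry", BLANK): ("1", 0, "halt"),
}


def run_turing_machine(rules, tape_str, state="right", max_steps=10_000):
    """Run until the machine enters 'halt'; return the non-blank tape contents."""
    tape = dict(enumerate(tape_str))  # sparse tape: position -> symbol
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        symbol = tape.get(head, BLANK)
        write, move, state = rules[(state, symbol)]
        tape[head] = write
        head += move
        steps += 1
    # Read the tape left to right and trim blanks from both ends.
    return "".join(tape[pos] for pos in sorted(tape)).strip(BLANK)


print(run_turing_machine(INCREMENT_RULES, "1011"))  # prints 1100
```

The sparse dictionary tape lets the head move left of the starting cell, which the carry rule needs when a number like `111` grows to `1000`.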

How it worked

Understanding how the first digital computer worked requires us to move away from the mechanical world of gears and toward the electronic world of switches. The Atanasoff-Berry Computer (ABC) was a marvel of minimalist engineering, and its operation rested on three core principles that are still the bedrock of modern computing.

First, it used binary digits (bits). Instead of counting from 0 to 9 like a human, the ABC represented every number as a series of 1s and 0s. Binary is the natural language of electrical circuits, since each digit needs only two states: on or off. Two states are far easier to tell apart reliably than ten, which kept the circuitry simple and dependable. Second, it used vacuum tubes. The ABC contained approximately 300 of them, acting as electronic switches that performed calculations in a fraction of the time electromechanical relays needed. This was the critical innovation that made the first digital computer truly electronic.
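To see what “a series of 1s and 0s” looks like in practice, here is a minimal sketch of fixed-width binary words. The 50-bit width mirrors the number size commonly cited for the ABC; the helper names `to_bits` and `from_bits` are hypothetical, and the sketch assumes unsigned whole numbers for simplicity. Each character in the output corresponds to one two-state switch.

```python
# Fixed-width binary words: an illustrative sketch. WORD_BITS = 50 follows the
# ABC's commonly cited 50-bit number size; the helper names are hypothetical.

WORD_BITS = 50


def to_bits(n: int, width: int = WORD_BITS) -> str:
    """Return a non-negative integer as a zero-padded string of 0s and 1s."""
    if not 0 <= n < 2 ** width:
        raise ValueError(f"{n} does not fit in {width} unsigned bits")
    return format(n, f"0{width}b")


def from_bits(bits: str) -> int:
    """Interpret a string of 0s and 1s as an unsigned binary integer."""
    return int(bits, 2)


print(to_bits(13, width=8))  # prints 00001101
print(from_bits("1101"))     # prints 13
print(2 ** WORD_BITS - 1)    # prints 1125899906842623, the largest 50-bit value
```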

Third, and most revolutionary, it separated the functions of computing and memory. The ABC had a “logic unit” for processing and a separate “regenerative memory” unit. Its memory was a novel system of two rotating drums, each holding 1,600 capacitors that stored binary digits (bits). Because capacitors leak their charge, the ABC had a built-in “refresh” mechanism that periodically restored each stored bit, the same principle used in DRAM chips today. The machine was not general-purpose: it was built for a specific task, solving systems of up to 29 simultaneous linear equations. To operate it, a user fed in numbers on punched cards, and results were recorded on a second set of cards. It was a dedicated, powerful, and elegantly simple machine.
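The refresh idea is easy to misread, so here is a toy model of regenerative memory. It is a sketch under invented assumptions: the leak rate, read threshold, and refresh interval are made-up numbers, not ABC measurements. In the ABC the rewriting was tied to the rotation of the drums; the sketch abstracts that away into a periodic `refresh` step that reads each bit and writes it back at full strength.

```python
# Toy model of regenerative capacitor memory (illustrative only; the leak rate,
# threshold, and refresh interval are invented numbers, not ABC measurements).

LEAK_PER_TICK = 0.1    # fraction of charge lost each time step (assumed)
READ_THRESHOLD = 0.5   # charge above this reads as 1 (assumed)


class LeakyMemory:
    def __init__(self, bits):
        # A stored 1 starts as a full charge (1.0); a stored 0 as no charge.
        self.charges = [1.0 if b else 0.0 for b in bits]

    def tick(self):
        """One time step: every capacitor loses part of its charge."""
        self.charges = [c * (1 - LEAK_PER_TICK) for c in self.charges]

    def read(self):
        """Sense each capacitor: a charge above the threshold counts as 1."""
        return [1 if c > READ_THRESHOLD else 0 for c in self.charges]

    def refresh(self):
        """Read every bit and write it back at full strength."""
        self.charges = [float(b) for b in self.read()]


def simulate(bits, ticks, refresh_every=None):
    """Let memory leak for `ticks` steps, refreshing every `refresh_every` steps."""
    mem = LeakyMemory(bits)
    for t in range(1, ticks + 1):
        mem.tick()
        if refresh_every and t % refresh_every == 0:
            mem.refresh()
    return mem.read()


print(simulate([1, 0, 1, 1], ticks=20))                   # prints [0, 0, 0, 0]
print(simulate([1, 0, 1, 1], ticks=20, refresh_every=3))  # prints [1, 0, 1, 1]
```

Without refresh, a stored 1 decays below the threshold after seven steps and is lost; refreshing before that point preserves the data indefinitely.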

In contrast, the ENIAC, which came later, was a brute-force giant. It used nearly 18,000 vacuum tubes and was programmed by physically plugging cables and setting switches, a process that could take days. Operating systems were still more than a decade away, but the ENIAC’s core strength, its ability to be reprogrammed for different tasks, was a leap forward. Yet it was the ABC’s pioneering use of binary electronic logic circuits that established the foundational architecture. The von Neumann architecture, in which a computer’s program and data share the same memory, would later become the standard, but the ABC’s separation of memory and processing was a crucial precursor. The first digital computer proved that electricity, guided by binary logic, could solve complex problems faster than any human.
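“Binary logic” becomes arithmetic by wiring simple gates together. The sketch below builds addition out of nothing but AND, OR, and XOR functions, using a generic ripple-carry adder. It is offered as an illustration of the principle only; it is not a reproduction of the ABC’s actual add-subtract circuitry, and all function names are our own.

```python
# Binary addition from logic gates: a generic ripple-carry adder, offered as an
# illustration only. It is not a reproduction of the ABC's add-subtract circuits.

def and_gate(a: int, b: int) -> int:
    return a & b


def or_gate(a: int, b: int) -> int:
    return a | b


def xor_gate(a: int, b: int) -> int:
    return a ^ b


def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three bits; return (sum_bit, carry_out)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return sum_bit, carry_out


def ripple_add(x_bits: list[int], y_bits: list[int]) -> list[int]:
    """Add two equal-length bit lists, least significant bit first."""
    result, carry = [], 0
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        result.append(s)
    result.append(carry)  # the final carry becomes the extra top bit
    return result


def int_to_bits(n: int, width: int) -> list[int]:
    """Least-significant-bit-first list of the low `width` bits of n."""
    return [(n >> i) & 1 for i in range(width)]


def bits_to_int(bits: list[int]) -> int:
    """Inverse of int_to_bits for an LSB-first bit list."""
    return sum(bit << i for i, bit in enumerate(bits))


print(bits_to_int(ripple_add(int_to_bits(6, 4), int_to_bits(3, 4))))  # prints 9
```

Each full adder passes its carry to the next, so the carry “ripples” from the lowest bit to the highest, just as a human carries digits when adding by hand.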

Why it was important

The importance of the first digital computer cannot be overstated. It was a paradigm shift, a leap from the industrial age to the information age. Its creation was the pivotal moment when mathematics, physics, and engineering converged to create a machine that could extend the human mind. It transformed computation from a slow, labor-intensive process performed by human “computers” (often women with mechanical calculators) into a near-instantaneous electronic operation. This single shift unlocked possibilities that were previously unimaginable.

Firstly, electronic computing played a decisive role in World War II. Colossus directly contributed to the Allied victory by helping break German codes, providing invaluable intelligence. The ABC, while not used in the war, proved the concept of electronic binary computing. The ENIAC, though completed after the war, demonstrated the immense potential of machine calculation for science and defense, running early calculations for the hydrogen bomb program. The first digital computers were forged in the crucible of global conflict, proving their worth as strategic assets of the highest order.

Secondly, it gave birth to a new field of science. The arrival of digital computers forced researchers to think about computational complexity, how to design algorithms, how to build logic gates and circuits, and how to create operating systems. It moved Ada Lovelace and Charles Babbage’s 19th-century vision from the theoretical to the tangible. Parallel processing, explored early on in the ENIAC, whose units could work simultaneously, became a cornerstone of high performance in later supercomputers.

Finally, it sparked a revolution that continues to this day. Every modern device, from the smartphone in your pocket to the largest server farm, is a descendant of that first digital computer. The general-purpose machines that followed gave physical form to Turing’s universal machine: a single device that, given enough memory and time, can execute any computable algorithm. The next milestone was the stored-program concept, a key innovation of the EDVAC (a successor to ENIAC), which allowed programs to be stored in memory just like data. This led directly to commercial computers, then personal computers, and finally the interconnected digital world we inhabit. The first digital computer was not just a machine; it was the seed from which our entire technological civilization has grown.
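The stored-program idea is simple enough to capture in a few lines. The sketch below is a toy machine with a hypothetical four-instruction set (`LOAD`, `ADD`, `STORE`, `HALT`) of our own invention, not the EDVAC’s real instruction set. What matters is that instructions and data sit in the same memory list, so changing the program means changing memory contents rather than rewiring cables as on the ENIAC.

```python
# A toy stored-program machine with a hypothetical four-instruction set.
# Instructions and data share one memory list, which is the essence of the
# stored-program idea; real machines encode instructions as numbers instead.

def run(memory: list) -> list:
    """Execute from address 0 until HALT; return the (modified) memory."""
    acc = 0  # accumulator register
    pc = 0   # program counter: address of the next instruction
    while True:
        op, arg = memory[pc]
        pc += 1
        if op == "LOAD":      # acc <- memory[arg]
            acc = memory[arg]
        elif op == "ADD":     # acc <- acc + memory[arg]
            acc += memory[arg]
        elif op == "STORE":   # memory[arg] <- acc
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode {op!r} at address {pc - 1}")


program = [
    ("LOAD", 4),     # address 0
    ("ADD", 5),      # address 1
    ("STORE", 6),    # address 2
    ("HALT", None),  # address 3
    20,              # address 4: data
    22,              # address 5: data
    0,               # address 6: the result is written here
]
print(run(program)[6])  # prints 42
```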

How it evolved today

The journey from the first digital computer to the device you are likely reading this on is a story of relentless miniaturization, exponential growth in power, and a fundamental expansion of what a computer is. The ABC, with roughly 300 vacuum tubes in a frame about the size of a large desk, could solve one specific type of math problem. The ENIAC, with its nearly 18,000 tubes, could be reprogrammed but was massive, consumed immense power, and was notoriously unreliable. Today, a smartphone has millions of times more computing power, fits in your pocket, and is more reliable than any machine of the 1940s.

The evolution began in the 1950s, when transistors replaced vacuum tubes. This first great leap made computers smaller, faster, and far more energy efficient. The next leap was the integrated circuit, or microchip, in the 1960s, which placed many transistors, and soon thousands, on a single silicon chip. From then on, hardware progress followed Moore’s Law, the observation that the number of transistors on a chip doubles roughly every two years. This led to the microprocessor in the 1970s, a computer on a single chip, which made personal computing possible.
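Doubling every two years compounds quickly, which is why Moore’s Law reshaped computing so dramatically. The sketch below models it as idealized exponential growth, N = N₀ × 2^(years / 2). The starting count of 1,000 transistors is a hypothetical placeholder, and real chips only roughly follow this curve.

```python
# Moore's Law as simple exponential growth: an idealized sketch that assumes a
# fixed doubling period. Real transistor counts only roughly follow this curve.

def moores_law(start_count: float, years: float, doubling_years: float = 2.0) -> float:
    """Project a transistor count `years` ahead, doubling every `doubling_years`."""
    return start_count * 2 ** (years / doubling_years)


# A hypothetical chip with 1,000 transistors, projected 20 and 40 years ahead.
print(moores_law(1_000, 20))  # prints 1024000.0 (2**10 times larger)
print(moores_law(1_000, 40))  # prints 1048576000.0 (2**20 times larger)
```

Twenty years of doubling multiplies the count by about a thousand; forty years multiplies it by about a million.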

This evolution also transformed the relationship between humans and machines. Operating systems began as simple monitor programs that managed the hardware and evolved into complex systems like Windows, macOS, and Linux, which handle multitasking and memory management and provide user-friendly interfaces. The first digital computers required teams of engineers to program them by plugging cables; today, intuitive graphical interfaces and high-level programming languages let a single developer create complex applications. By today’s standards, the storage of the first computers is almost laughable. Each of the ABC’s two memory drums held just 30 numbers, and the ENIAC’s read-write memory consisted of twenty accumulators, each holding one ten-digit decimal number. Today, a single microSD card can hold a terabyte or more, billions of times as much.
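The “billions of times” comparison can be checked with back-of-the-envelope arithmetic. This sketch uses the article’s figure of 30 numbers of 50 bits each (the capacity usually quoted per ABC memory drum) and assumes a 1 TB card, using the decimal definition of a terabyte.

```python
# Back-of-the-envelope storage comparison. The 30-number, 50-bit figures come
# from this article; the 1 TB card size is an assumed modern example.

ABC_NUMBERS = 30       # numbers held (the figure usually quoted per drum)
BITS_PER_NUMBER = 50   # ABC word size
TERABYTE = 10 ** 12    # bytes, using the decimal definition

abc_bits = ABC_NUMBERS * BITS_PER_NUMBER  # 1,500 bits
abc_bytes = abc_bits / 8                  # 187.5 bytes
ratio = TERABYTE / abc_bytes              # how many times larger a 1 TB card is

print(f"ABC: {abc_bits} bits = {abc_bytes} bytes")       # prints ABC: 1500 bits = 187.5 bytes
print(f"A 1 TB card holds about {ratio:.2e} times as much")  # prints ... 5.33e+09 ...
```

Even counting both drums, the ratio stays in the billions.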

Furthermore, the evolution has expanded the definition of a computer. The first digital computer was a single, isolated machine; today, we have a global network of billions of computers. Parallel processing, a concept explored in the ENIAC, is now standard in everything from video game consoles to the world’s most powerful supercomputers, which use thousands of processors working in tandem. The stored-program concept has evolved into complex software ecosystems, and the von Neumann architecture remains the dominant paradigm, though new models such as quantum computing now challenge it. From solving equations in a basement to powering artificial intelligence and connecting the globe, the evolutionary arc of the first digital computer is the defining technological narrative of the last 80 years.

Frequently Asked Questions (FAQs)

Which machine was legally recognized as the first electronic digital computer?

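The Atanasoff-Berry Computer (ABC), invented by John Vincent Atanasoff and Clifford Berry. In the 1973 court case Honeywell v. Sperry Rand, the ENIAC patent was invalidated and the court found that its core ideas had been derived from Atanasoff’s work, effectively recognizing the ABC as the first electronic digital computer.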

How much storage did the first computer have?

Each of the ABC’s two memory drums could store 30 numbers of 50 binary digits each. That is 1,500 bits, or under 200 bytes per drum: less than the text of a short email. The ENIAC, completed a few years later, could hold only twenty ten-digit decimal numbers in its accumulators.

What was the main difference between the ABC and ENIAC?

The ABC was a special-purpose machine designed to solve linear equations, while the ENIAC was a general-purpose machine that could be reprogrammed for a wide variety of tasks. However, the ABC came first and was the first machine to combine electronic vacuum-tube switching with binary arithmetic.
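To make the ABC’s special purpose concrete, here is a minimal sketch of solving simultaneous linear equations by Gaussian elimination, the general family of method the ABC mechanized for systems of up to 29 unknowns. This is a modern illustration using exact fractions, not the ABC’s own procedure; the ABC is reported to have carried out its elimination with repeated binary additions and subtractions rather than the division used here. The function name is our own.

```python
# Solving simultaneous linear equations by Gaussian elimination: a modern
# sketch using exact fractions, not a reproduction of the ABC's procedure.
from fractions import Fraction


def solve_linear_system(a, b):
    """Solve a x = b for a square matrix a (list of rows) and vector b."""
    n = len(a)
    # Augmented matrix of exact fractions, so no rounding error creeps in.
    m = [[Fraction(v) for v in row] + [Fraction(rhs)] for row, rhs in zip(a, b)]

    for col in range(n):
        # Find a row with a nonzero entry in this column and swap it up.
        pivot = next((r for r in range(col, n) if m[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        # Eliminate this variable from every row below the pivot row.
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            m[r] = [x - factor * y for x, y in zip(m[r], m[col])]

    # Back substitution, from the last equation upward.
    x = [Fraction(0)] * n
    for i in reversed(range(n)):
        known = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - known) / m[i][i]
    return x


# 2x + y = 5 and x - y = 1 have the solution x = 2, y = 1.
solution = solve_linear_system([[2, 1], [1, -1]], [5, 1])
print([int(v) for v in solution])  # prints [2, 1]
```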

Who are the main figures in the history of computer hardware?

Key figures include Ada Lovelace and Charles Babbage for the conceptual Analytical Engine; John Vincent Atanasoff and Clifford Berry for the ABC; John Mauchly and J. Presper Eckert for ENIAC; Alan Turing for the Universal Turing Machine; and Konrad Zuse for the Z3.

Conclusion

The story of the first digital computer is a powerful reminder that innovation is rarely a single moment of triumph. It is a complex tapestry woven from brilliant insights, desperate wartime needs, and human ambition. While the public may still associate the dawn of computing with the massive ENIAC, the truth, revealed through legal scrutiny, points to the quiet genius of John Vincent Atanasoff and his Atanasoff-Berry Computer (ABC). This machine, built in a basement at Iowa State College, was the first to harness the power of binary digits (bits) and electronic vacuum tubes in a functional computing device. It laid the essential foundation upon which all subsequent machines were built.

From that pioneering work, the technology evolved rapidly, shaped by the contributions of countless others. Colossus showed the potential of electronic computing in global conflict. The ENIAC popularized the concept of a programmable, general-purpose machine. The von Neumann architecture provided a blueprint for the stored-program computer, leading to the EDVAC and beyond. Each step built upon the last, accelerating the pace of innovation and shrinking the size of the machines until they became a ubiquitous part of our lives.

Today, we stand on the shoulders of these giants. The device you are using now is a testament to the vision of John Vincent Atanasoff, the engineering of J. Presper Eckert and John Mauchly, and the theoretical genius of Alan Turing. The first digital computer was more than a machine; it was the beginning of a new era. Understanding its true origins gives us a deeper appreciation for the power we now hold in our hands and the incredible journey of human ingenuity that made it all possible. The digital world is not the product of a single moment, but the result of a collective, often contentious, and undeniably brilliant history of computing.