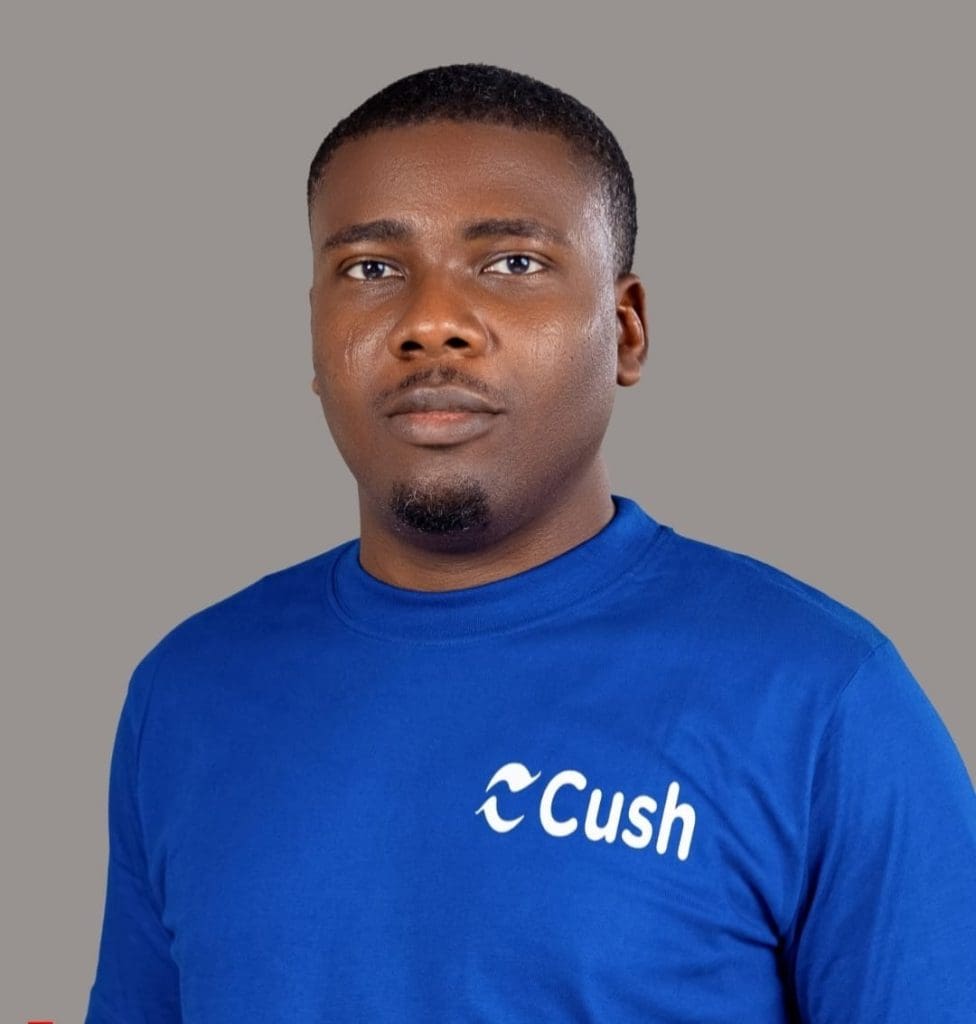

By: Wale Ameen

Wale Ameen is a two-time founder whose work sits at the intersection of technology, governance, and economic inclusion. He is the co-founder and CEO of Cush, a startup building financial infrastructure for the globally mobile. Cush is designed to dismantle the problem of credit invisibility, which affects more than 300 million migrants and expats worldwide, by rethinking how financial trust is created, measured, and transported across borders. Ameen’s leadership reflects a focus on systems change: how policy, data, and technology can be aligned to expand access rather than reinforce exclusion.

At the core of Cush’s model is the Cush Passport, a proprietary, portable credit score that converts everyday financial behaviours such as regular remittances and consistent saving into a verifiable global financial identity. By combining high-frequency tools like money transfers with AI-driven guidance via its chatbot, ‘Imisi’, Cush captures transactional data that traditional credit bureaus overlook, enabling users to access housing, fair lending, and essential services as they move across countries. Ameen’s work through Cush reflects a broader commitment to reducing friction in migration and advancing global economic inclusion through practical, scalable solutions.

We need to stop dancing around this issue.

Let’s face it. The biggest risk in AI today isn’t rogue machines or runaway superintelligence. It’s something far more familiar to many of us building systems and playing actively within the AI space: power without accountability.

AI has quietly become decision-making infrastructure in virtually every facet of our lives. It is there, even when you don’t realise it. It influences who gets hired, who gets credit approved, who gets flagged, who gets seen, and who gets ignored. It’s the silent decider in many solutions, and yet in far too many cases no one can clearly answer a basic question:

Who is responsible when it goes wrong? Who gets questioned when AI harms human lives? When you take a deep look at the ecosystem as it stands right now, there’s no clear-cut answer to either question. And the fact is, that’s not a technical oversight. That’s a governance failure.

To Tech CEOs: With Great Power Comes Great Responsibility

If you’re leading a company building or deploying AI at scale, here’s the uncomfortable truth: you’re no longer “just innovating.” You are among the 1% of the world who are shaping social outcomes. This is a huge responsibility.

When your systems automate decisions, you don’t get to outsource responsibility to “the model,” “the data,” or “unintended consequences.” Those explanations may be just enough to satisfy internal meetings, but they won’t hold up in courtrooms, regulatory hearings, or public opinion.

We’ve seen this movie before in finance, energy, and pharmaceuticals. Industries that insisted regulation would “slow innovation” ended up paying a much higher price when trust collapsed. Here is one truth we need to establish: Accountability isn’t anti-innovation.

It’s how innovation survives scrutiny.

The companies that will endure are not the fastest movers, but the ones that can explain their systems, audit their impacts, and take responsibility when harm occurs. That’s not a weakness. It’s institutional maturity.

To Policymakers: Ethics Is Not Enough

For years, AI governance has leaned heavily on ethics: principles, guidelines, voluntary commitments. These have value, but let’s be clear: ethics without enforcement is not governance.

If companies write their own rules, monitor themselves, and face no meaningful consequences when things go wrong, the result is predictable. Power concentrates. Risks externalise. Harm becomes “collateral damage.”

Real governance answers harder questions: Who has authority to deploy high-risk systems? Who is legally accountable for outcomes? Who can audit AI systems, and with what powers? What remedies exist for people harmed by automated decisions? How are we measuring the impact of the answers machines hand off to humans? Questions, questions. But without clear answers, regulation becomes mere theatre, where speculation takes the place of oversight and trust is eroded.

“Move Fast” Is a Political Choice

The mantra of “move fast” is often framed as neutral, even inevitable. It’s neither.

Speed without accountability shifts risk downward: from corporations to workers, from platforms to users, from developers to society. When AI systems fail, it’s rarely executives who pay the price. It’s individuals denied opportunities, communities over-policed, or citizens misclassified by systems they can’t challenge.

That asymmetry is not accidental. It’s the result of governance gaps. If AI is going to scale, accountability has to scale with it. And everyone, starting from the top, must consciously work at it.

Global Impact, Unequal Voice

One more reality deserves attention. Much of the world consumes AI systems designed and governed elsewhere. In the Global South, these technologies often arrive faster than the laws, institutions, or safeguards needed to control them.

That creates a dangerous imbalance: imported technology, exported risk.

If global AI governance doesn’t reflect this reality, we risk reproducing digital colonialism, where decisions affecting millions are made far from the people who live with the consequences.

Accountability must travel with technology. Otherwise, trust will fracture along geopolitical lines.

What Responsible Power Actually Looks Like

This isn’t an abstract thought. The path forward is clear and succinct:

- Explicit responsibility: Named entities accountable for AI outcomes, not just processes.
- Independent oversight: External audits with real authority, not box-ticking exercises.
- Meaningful recourse: The right to challenge, appeal, and seek remedy.
- Proportionate consequences: The greater the impact, the greater the duty of care.

These principles already govern other high-impact sectors. AI should not be the exception.

The Bottom Line

AI is no longer experimental. It is infrastructure, and it affects virtually every segment of our lives. And history is unforgiving when power outpaces accountability. When governance lags, harm doesn’t remain theoretical; it accumulates, becomes politicised, and eventually forces intervention under crisis conditions.

The question for both CEOs and policymakers is simple: Will accountability be built in by design or imposed after failure? Because one way or another, accountability always arrives. The only question is whether we choose it early, thoughtfully, and collectively, or late, painfully, and under pressure. AI power without accountability isn’t just risky. It’s a governance failure. And fixing it is no longer optional.

These themes are explored further in my forthcoming book, “Ethical AI: Principles, Power, and the Future of Responsible Intelligence.”