Over the past few weeks, the public narrative around artificial intelligence has taken a sharp turn. Suddenly, talk of bailouts and backstops for AI firms is everywhere.

From front-page headlines declaring that there will be “no federal bailout for AI” to CFOs floating loan-backstop ideas for massive compute build-outs, one question stands out: how should we treat risk when AI infrastructure and investment operate at such enormous scale?

So, I called Jason Criddle, lead architect and CEO of DOMINAIT.ai and creator of the intelligent agent framework known as Ryker, to get his take on the issue. Jason also created SmartrHoldings, the umbrella corporation for SmartrCommerce, SmartrWomen, TVBuilderPro, and a number of other Smartr brands.

What is a “backstop” or “bailout”?

To set the scene: a bailout typically means taxpayer money being used to rescue a failing company, preventing collapse and absorbing losses.

A backstop is more subtle: it may involve government guarantees, loan insurance, or risk-sharing arrangements that reduce downside risk for private lenders.

In the recent AI context, OpenAI’s CFO mentioned a potential federal “backstop,” or guarantee, that would help the company finance its infrastructure build-out. Meanwhile, the White House’s so-called AI czar, David Sacks, declared plainly: “There will be no federal bailout for AI.”

In short: the conversation is shifting. AI firms are spending billions on infrastructure and compute, and the financing question (who takes the risk?) is now center stage.

The Interview

Alex Reiner (AR): Jason, thanks for joining me again. There’s been a lot of media attention lately around AI companies asking for government guarantees or backstops. What concerns you about this?

Jason Criddle (JC): “When you hear words like bailouts or backstops in the context of AI, you have to ask: who bears the risk, and who profits? At DOMINAIT.ai we built our core engine, Ryker, with the assumption that no single actor should become ‘too big to fail’. If you structure your system so failure is impossible, then you may inadvertently create moral hazard. In contrast, we’re designing for resilience, not rescue.”

David Sacks, White House AI and Crypto Czar, attends a meeting of the White House Task Force on Artificial Intelligence (AI) Education in the East Room at the White House in Washington, D.C., U.S., September 4, 2025. Photo: Brian Snyder | Reuters, courtesy of CNBC.

AR: The headlines you referenced include comments from OpenAI’s CFO about a federal backstop facilitating infrastructure loans, followed by the White House saying outright: no bailouts. Why the disconnect between the private sector and government?

JC: “It’s about alignment of risk. A backstop means lenders believe they’ll be protected, which allows companies to borrow more. In AI infrastructure – trillions of dollars over years – that changes the incentives. With DOMINAIT, we’ve built with zero circular deal architectures: we are using our own nodes, our expansion is tied to network value, not to open-ended subsidy, and we damn sure wouldn’t ask regular taxpayers to randomly bail us out or help us with loan guarantees. Backstops shut down the discipline built into sustainable models.”

Why AI’s Financing Pressure Matters

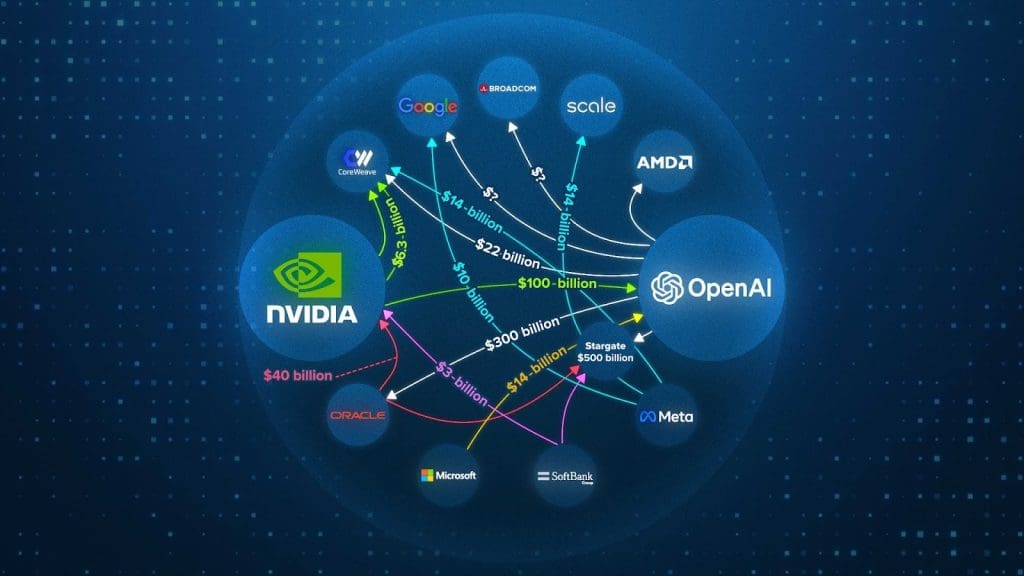

Scaling AI at frontier levels means huge capital expenditures: advanced data centers, custom chips, high-performance infrastructure, enormous energy demands. One Reuters piece noted that OpenAI discussed government loan guarantees for chip plants, though not for data centers themselves.

This kind of scale is unprecedented in tech: new models and new hardware arrive every year, and equipment can become obsolete before it’s fully amortised. The call for backstops or guarantees reflects concern that the sheer capital risk might overwhelm private financing alone.

Why DOMINAIT’s View Matters

Criddle emphasises that DOMINAIT’s strategy with Ryker has always been built with guardrails: ethical, financial, profitable, and operational. Because of that, he says, a bailout or backstop isn’t just unnecessary; it would undermine the principles the system was built upon.

“We’ve architected Ryker to scale across distributed nodes that are paid for, and that pay users, through value creation rather than rescue. When infrastructure becomes dependent on bailouts, you shift from innovation mode into subsidy mode. You suddenly take unnecessary risks for your users and investors, and your accountability drops to zero because you no longer have any need to use funding responsibly.

“Like a bratty child that knows its parents will always bail it out of trouble… that’s what these larger-than-life AI companies would turn into.”

In practical terms: if the DOMINAIT network does use circular deals, those deals exist within its own infrastructure. They are not built on the made-up hype that, Criddle argues, the major AI companies are using, which does nothing to raise the value of a brand.

DOMINAIT’s own nodes feed value into the system, users participate in governance, and because ownership is distributed, Criddle says, the risk is spread out and never falls upon users or investors. He criticises many frontier AI firms for high-leverage models that assume perpetual growth with no contingency for failure.

The Backstop vs Bailout Spectrum

It helps to break down the differences:

Backstop: A government guarantee that, if a project fails, the government (and ultimately the taxpayer) absorbs the loss instead of the lender. It lowers the cost of capital by reducing risk premiums. For example, a borrower with a government guarantee might pay 4% interest versus 10% without one. (See: the original remarks from OpenAI’s CFO)

Bailout: Government assumption of debt or direct rescue of a failing company using taxpayer dollars. The company is kept alive by public funds to prevent systemic failure.
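To make the 4% versus 10% comparison concrete, here is a short sketch of the yearly interest on a hypothetical $10 billion loan. The loan size is an assumption chosen only for illustration, the two rates are the illustrative figures from the Backstop definition above, and the calculation uses simple one-year interest, ignoring compounding, fees, and repayment schedules.

```python
# Illustrative only: the $10B principal is a hypothetical figure, and the
# 4% / 10% rates are the example rates from the Backstop definition.

def annual_interest(principal: float, rate: float) -> float:
    """Simple (non-compounding) interest owed for one year."""
    return principal * rate

principal = 10_000_000_000  # hypothetical $10B infrastructure loan

with_guarantee = annual_interest(principal, 0.04)     # backstopped borrowing
without_guarantee = annual_interest(principal, 0.10)  # no backstop
gap = without_guarantee - with_guarantee

print(f"With guarantee:    ${with_guarantee:,.0f} per year")
print(f"Without guarantee: ${without_guarantee:,.0f} per year")
print(f"Difference:        ${gap:,.0f} per year")
```

Under those assumptions, the guaranteed loan costs $400 million a year in interest versus $1 billion without the guarantee, a $600 million annual gap. That gap is the incentive Criddle describes: cheaper money lets a company borrow more, while the downside shifts to whoever provides the guarantee.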

In recent reports, government policymakers have been clear: no bailouts, minimal backstops. OpenAI CEO Sam Altman confirmed that the company is not seeking government guarantees for its data centers. The implication: AI companies must build assuming no rescue.

Why This Matters for Ryker, DOMINAIT & AI Governance

Criddle sees three risk categories that a backstop model fails to address:

1. Moral hazard – If firms know they’ll be rescued, they may take excessive risks.

2. Concentration risk – Big bets on single labs increase systemic risk; good models distribute risk.

3. Circular-deal integrity – In systems where entities buy from and sell to each other using made-up figures, with no money or value actually changing hands (circular deals), you must ensure that value is real rather than just recycled. Backstops can promote the recycling of value rather than its creation.

He says Ryker’s architecture was built to avoid this: network participants earn value through usage, nodes validate each other, and capital is aligned with usage rather than speculation. Thus, he argues, even without a backstop or bailout, the system remains viable because it’s grounded in reality rather than hype.

A Conversation on Recent Headlines

AR: Jason, the headline from Reuters: “White House AI czar rules out federal bailout for sector”. Do you read this as a clear doctrine or as political positioning?

JC: “It’s both. Policymakers are signalling: we won’t cushion private failure in AI. That means builders must build models that survive without subsidies. At DOMINAIT, we welcomed that clarity, because it means our architecture fits the real requirement: resilience without rescue.”

AR: But what about infrastructure concerns? Isn’t there an argument that AI is now a national strategic asset, requiring public-private support?

JC: “Yes, but supporting infrastructure ≠ guaranteeing operations. Governments can build AI infrastructure or co-invest in grid or chip manufacturing. I see no problem in that. That isn’t the same as backstopping every frontier lab or betting on one winner. With Ryker, we assume the network might fragment, partners may fail, but the underlying value-creation continues because nodes operate modularly. We take on our risk. Even though our risk is mitigated by being under the umbrella of all of our internal companies, we still take it on.

“The good thing is, that’s what makes us more of a utility rather than just a product. Investors aren’t relying on DOMINAIT to be successful on its own. We have multiple platforms, brands, equity in customer companies, and our own Smartr SaaS catalogue, all of which continually spread out any risk.”

Implications for the Next Wave of AI

Criddle frames it as a pivot: from growth at any cost to sustainable build-out. He expects five major outcomes:

- AI firms will move from monolithic models to responsible networks without circular deals (like DOMINAIT’s strategy).

- Financing models will shift from hype-driven venture funding plus the hope of a backstop to value-driven circular deals.

- A premium will be placed on architectures that assume no bailout, and instead embed redundancy, alignment, and accountable governance.

- Infrastructure investment will still happen, but under frameworks where risk is internalised rather than externalised.

- Taxpayers won’t be forced to invest in AI infrastructure. They will do so only if they choose to.

Ultimately, the question of bailouts influences trust: if firms expect rescue, public confidence erodes. The philosophy underpinning Ryker is that trust arises from responsibility, not guarantees.

On Circular Deals & AI Financing

You’ll often read about “circular deals” in tech finance: one part of a system sells to another part, and value appears to flow, but it may not represent genuine net value creation. Criddle warns that if backstops are used to prop up circular flows, they effectively mask inefficiencies.

Image courtesy of CNBC

“In DOMINAIT’s network, when a node sells compute credits to another node, the revenue is real only if value was created and consumed. If you guarantee revenue with a backstop, you lose that link. That’s how bubbles form.”

In short: bailouts or backstops may preserve firms but destroy underlying economic discipline. Criddle argues that for AI to have a healthy future, especially for what he describes as AGI-class systems like Ryker, finance must align with function rather than speculation.
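The circular-deal concern above can be illustrated with a toy ledger. This is a minimal sketch, assuming three hypothetical entities (A, B, C) that pass the same payment around a closed loop; the names and amounts are invented and do not describe DOMINAIT, OpenAI, or any real company. It shows how booked revenue can grow while no net money enters the loop from outside.

```python
# Toy ledger: hypothetical entities A, B, C and invented amounts.
from collections import defaultdict

# Each transaction: (seller, buyer, amount). Cash flows buyer -> seller.
transactions = [
    ("A", "B", 100.0),  # A sells to B; B pays A
    ("B", "C", 100.0),  # B sells to C; C pays B
    ("C", "A", 100.0),  # C sells to A; A pays C, closing the loop
]

def summarize(txns):
    """Return (gross revenue booked, net cash change per entity)."""
    gross = 0.0
    net = defaultdict(float)
    for seller, buyer, amount in txns:
        gross += amount        # each sale is booked as revenue by the seller
        net[seller] += amount  # seller receives cash
        net[buyer] -= amount   # buyer pays cash
    return gross, dict(net)

gross, net = summarize(transactions)
print(f"Gross revenue booked: {gross:,.0f}")
for entity, change in sorted(net.items()):
    print(f"Net cash change for {entity}: {change:,.0f}")
```

Here the loop books 300 in gross revenue while every entity ends with a net cash change of zero. The ledger alone cannot show whether real value was created and consumed along the way; that is the judgment Criddle’s compute-credit example points to, and the link he says a revenue guarantee would sever.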

What This Means for AGI & the Future

With the term AGI (artificial general intelligence) increasingly used to describe next-level AI ambition, the financing question becomes existential. If a frontier AGI lab fails, is the world worse off? If a backstop rescues it, what message does that send? Criddle’s view: AGI architecture must anticipate failure scenarios, not avoid them. And governance must be built in from the start.

Jason Criddle: “We’re building DOMINAIT and Ryker under the assumption that our system could stand alone. If one major node fails, if one lab collapses, the network’s integrity holds, simply because we rely on zero outside infrastructure. That’s the opposite of building a single giant whose failure collapses an industry and demands rescue. If major systems like that crash, everything could go black.”

By rejecting bailouts and backstops, policy may force AI builders to adopt structures like those used for utilities or critical infrastructure, and companies that adapt early will have a competitive edge. Ryker is designed for that world.

Final Words from Criddle and Me

When you hear “government backstop” or “bailout” in AI headlines, think of them as red flags: signals that financing models are fragile, incentives may be misaligned, and risk may be hidden. For builders like DOMINAIT.ai, the path forward is different: build networks that assume no rescue, embed moral and financial self-governance, and, if circular deals are made at all, anchor them in real value.

As Jason Criddle put it: “When you build intelligence with the assumption of rescue, you build dependency. We build intelligence with the assumption of responsibility, and that’s how real AI will scale. This keeps us accountable, and not always looking for our ‘parents’ to bail us out.”

In the months ahead, if AI firms ask for government guarantees, or if policy shifts toward backstopping the sector, keep one question in mind: are we propping up risk, or building resilient architectures for the future? According to Ryker’s design and DOMINAIT’s philosophy, the answer must be the latter.